Best Practices for Avionics Software Guidelines: Engineers & Managers

In flying, there are tradeoffs between payload, range, speed, and cost. The vast breadth of aircraft types for sale today reflects the simple fact that buyers prioritize these tradeoffs differently. However, the variations in avionics software development practices are much more constrained: everyone wants to minimize the following attributes:

- Cost

- Schedule

- Risk

- Defects

- Re-use Difficulty

- Certification Roadblocks

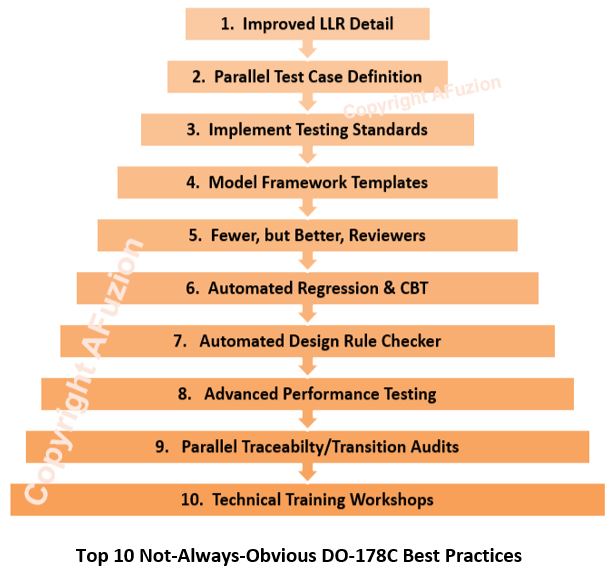

The following pages provide the Avionics Software Guidelines Best Practices that can help minimize all six of these important attributes in your development.

Certain good avionics software development practices are self-evident. Just as educated people know that improved diet, exercise, sleep, and stress relief are all “best practices” for human health, the apparent good practices for software include defect prevention, experienced developers, automated testing, and fewer changes. This paper isn’t about the obvious, since any reader who has made it to this page is assumed to be educated. Instead, the Avionics Software Guidelines Best Practices identified herein are subtler and considerably “less practiced”.

Improved LLR Detail

“Requirements are the foundation to good engineering. Detailed requirements are the foundation to great engineering.”

Smarter researchers than this author long ago showed that most software defects are due to weak requirements. In The Mythical Man-Month, Brooks opined that assumptions were a leading cause of software defects. Avionics Software Guidelines (DO-178C) was intentionally strengthened over its predecessor DO-178B to ensure acceptable requirements via its mandate to trace structural coverage analysis to requirements-based tests (RBT). Remember: Avionics Software Guidelines doesn’t provide strict requirements standards, but for DAL A, B, and C, the developer must. Those standards should define the scope and detail associated with High-Level Requirements (HLRs) and Low-Level Requirements (LLRs). Ideally, the Requirements Standard will include examples of HLRs versus LLRs. Requirements review checklists should likewise contain ample criteria for evaluating the level of detail within low-level requirements.

Parallel Test Case Definition

If a Tester cannot unambiguously understand the meaning of a software requirement, how could the developer?

Avionics Software Guidelines is agnostic regarding cost and schedule: the developer is freely allowed to be behind schedule and over budget. While transition criteria must be explicitly defined for all software engineering phases, it is normal for companies to define their test cases after the software is written. However, great companies define test cases before code is written. Why? Because it’s better to prevent errors than detect them during testing. If a Tester cannot unambiguously understand the meaning of a software requirement, how could the developer? Good companies verify requirements independently by having the software tester define test cases as part of the requirements review before any code is written. Requirements ambiguities or incompleteness are corrected earlier, yielding fewer software defects and expedited testing.
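A minimal sketch of what defining test cases during the requirements review could look like. The requirement `LLR-042`, its 200 ft threshold, the test case IDs, and the function names are all hypothetical and invented for illustration; they do not come from any real project or from the standard.

```python
from dataclasses import dataclass

# Hypothetical low-level requirement, invented for this example:
# LLR-042: "The altitude alert shall be active when the absolute difference
# between current and selected altitude exceeds 200 ft."
ALERT_THRESHOLD_FT = 200


@dataclass(frozen=True)
class RequirementTestCase:
    case_id: str
    requirement_id: str
    current_ft: int
    selected_ft: int
    expected_alert: bool


# Written by the tester during the requirements review, before any code exists.
# The boundary cases force the reviewer to settle what "exceeds" means.
TEST_CASES = [
    RequirementTestCase("TC-042-1", "LLR-042", 10000, 10000, False),  # no deviation
    RequirementTestCase("TC-042-2", "LLR-042", 10200, 10000, False),  # exactly 200 ft
    RequirementTestCase("TC-042-3", "LLR-042", 10201, 10000, True),   # just above
    RequirementTestCase("TC-042-4", "LLR-042", 9799, 10000, True),    # below selected
]


def altitude_alert(current_ft, selected_ft):
    """Implementation written later, against the already reviewed test cases."""
    return abs(current_ft - selected_ft) > ALERT_THRESHOLD_FT


def failing_cases(function, cases):
    """Return the IDs of the test cases whose expected result is not met."""
    return [
        case.case_id
        for case in cases
        if function(case.current_ft, case.selected_ft) != case.expected_alert
    ]
```

In this sketch, a developer who read "exceeds" as "at least" would fail `TC-042-2`; because the tester had to choose an expected result for that boundary, the ambiguity comes up at review time rather than during testing.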

Automated Regression & CBT

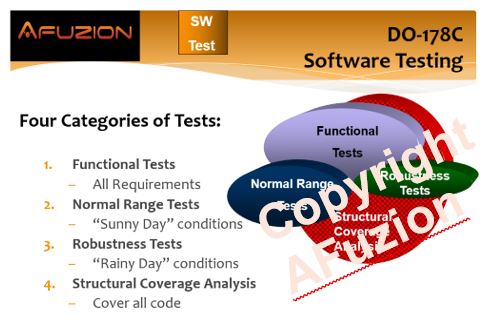

In the non-critical software world, testing is a “great idea”. Purchasing an exotic car or yacht can also seem like a great idea at acquisition time, as this author has personally experienced. However, unlike luxury cars and yachts, software testing should not be considered a luxury but rather a necessity. Avionics Software Guidelines requires a variety of testing, with rigor increasing with criticality. The basic types of testing are depicted in the accompanying figure.

Avionics Software Guidelines requires regression analysis, whereby software updates are assessed for potential impact on previously tested software, with retest mandatory wherever potential impact exists. Over the project life, and absolutely over the product life, more time will be spent on testing than on development. Many consider software testing to be the largest line-item expense in Avionics Software Guidelines. Devoting upfront time to developing a test automation framework can provide the single largest cost reduction. And continuous-based testing (CBT), which automatically retests changes continuously, is the best means to meet the Avionics Software Guidelines regression objectives. Why? By continuously retesting all software, the regression analysis is greatly simplified: just repeat all the tests by pressing a button. Voilà.

Implement Testing Standards

Requirements Standard. Design Standard. Coding Standard. Testing Standard … Wait, there ISN’T a Testing Standard?!?

Avionics Software Guidelines explicitly requires standards for DAL A, B, and C. Which standards? Requirements, Design, and Code. Why doesn’t Avionics Software Guidelines require a verification or testing standard? Supposedly there should be less variation within testing than in the preceding lifecycle phases, which admittedly vary more between companies and projects. No one has ever accused Avionics Software Guidelines of requiring too few documents; given the traditional waterfall basis (inherited two decades prior from DO-178A), ample documents are required already. However, efficient companies recognize that verification is an expensive and somewhat subjective activity best managed via a Software Test Standard. Since it is not formally required, it would not have to be approved or even submitted. What would such a hypothetical Software Test Standard cover? At a minimum, the following should be included, as excerpted from AFuzion’s basic two-day Avionics Software Guidelines training class, provided to over 23,500 engineers worldwide:

- Description of RBT to obtain structural coverage;

- Details regarding traceability granularity for test procedures and test cases;

- Explanations of structural coverage assessment per applicable DAL(s);

- Definition of Robustness testing, as applied to requirements and code (per applicable DALs);

- If DAL A, explanations of applicable MC/DC and source/binary correlation;

- Coupling analysis practices including the role of code and design reviews;

- Performance-based testing criteria.
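As an illustration of the kind of MC/DC explanation such a standard might contain, the sketch below enumerates independence pairs for a decision: pairs of input vectors that differ in exactly one condition and flip the decision outcome. It assumes the unique-cause form of MC/DC; the function names and the example decision are hypothetical, and this is not a substitute for a qualified coverage tool.

```python
from itertools import product


def independence_pairs(decision, n_conditions):
    """Map each condition index to the vector pairs that show its independent effect."""
    pairs = {i: [] for i in range(n_conditions)}
    for vector in product([False, True], repeat=n_conditions):
        for i in range(n_conditions):
            if vector[i]:
                continue  # visit each pair once, starting from the False side
            flipped = vector[:i] + (True,) + vector[i + 1:]
            if decision(*vector) != decision(*flipped):
                pairs[i].append((vector, flipped))
    return pairs


def achieves_mcdc(decision, n_conditions, test_vectors):
    """True if every condition has at least one independence pair in the test set."""
    tested = set(test_vectors)
    pairs = independence_pairs(decision, n_conditions)
    return all(
        any(a in tested and b in tested for a, b in pairs[i])
        for i in range(n_conditions)
    )


def example_decision(a, b, c):
    """Hypothetical three-condition decision used only for illustration."""
    return a and (b or c)
```

For `example_decision`, the four vectors `(True, False, False)`, `(True, True, False)`, `(True, False, True)`, and `(False, True, False)` satisfy `achieves_mcdc`, while the two vectors `(True, True, True)` and `(False, False, False)` toggle the decision outcome but do not show the independent effect of any single condition.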

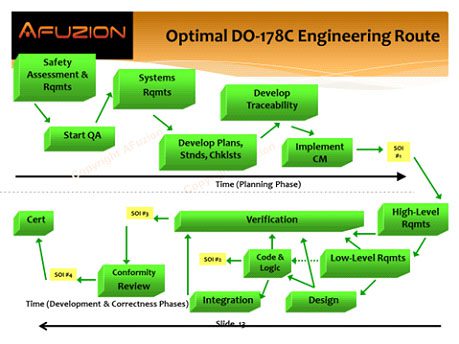

Avionics Software Guidelines Optimal Engineering Route

While the Avionics Software Guidelines engineering path is seemingly vague, hundreds of Avionics Software Guidelines software projects affirm that the following software lifecycle is optimal for Avionics Software Guidelines:

Figure: Optimal Avionics Software Guidelines Engineering Route per AFuzion

Parallel Traceability/Transition Audits

“Why do it right the first time when it’s fun to keep doing it over and over…” – Anonymous

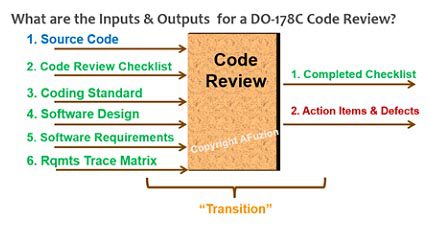

Where the amateur athlete focuses on the end result, the professional instead focuses on optimizing the technique, since the end result depends upon that technique. Amateur and professional Avionics Software Guidelines software engineers both know minimizing defects is a goal, but the professional knows that technique matters: in avionics, that is best summarized via Avionics Software Guidelines traceability and transition criteria. While the amateur avionics team assesses traceability and transition criteria at the end, e.g. at Avionics Software Guidelines SOI-4, the experienced team instead deploys proactive SQA and tools to monitor bi-directional traceability continuously. Emphasize audits of transition criteria early, fix process shortfalls, and record the audit results. Remember, each type of Avionics Software Guidelines artifact review constitutes a “transition”: engineers must follow the defined transition criteria and QA must audit to assess process conformance.
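Continuous monitoring of bi-directional traceability can be as simple as a check that runs on every change. The sketch below is a minimal illustration with hypothetical requirement and test case IDs; real projects would normally pull this data from their requirements management tool.

```python
def trace_gaps(requirements, tests, req_to_tests):
    """Return (requirements with no test, tests that trace to no requirement)."""
    untested = sorted(
        req for req in requirements if not req_to_tests.get(req)
    )
    traced_tests = {
        test for linked in req_to_tests.values() for test in linked
    }
    orphan_tests = sorted(test for test in tests if test not in traced_tests)
    return untested, orphan_tests


# Hypothetical project data, for illustration only.
REQUIREMENTS = ["LLR-1", "LLR-2", "LLR-3"]
TESTS = ["TC-1", "TC-2", "TC-3"]
REQ_TO_TESTS = {"LLR-1": ["TC-1"], "LLR-2": ["TC-1", "TC-2"]}
```

Here `trace_gaps(REQUIREMENTS, TESTS, REQ_TO_TESTS)` reports `LLR-3` as untested and `TC-3` as an orphan. Running such a check throughout the project, rather than only before the final audit, is one way to surface the gaps while they are still cheap to fix.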

An example of an Avionics Software Guidelines Software Code Review transition is depicted below: ensure all the inputs and outputs are perfectly utilized, under CM, and referenced in the Avionics Software Guidelines review results:

Figure: Optimal Avionics Software Guidelines Code Review Transitions per AFuzion

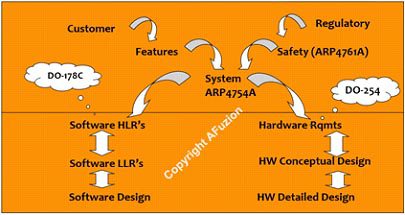

Avionics Software Guidelines Requirements Decomposition

Avionics Software Guidelines requires sequential requirements decomposition. The Figure below depicts that decomposition, along with the relationship of Avionics Software Guidelines software requirements to Avionics Software Guidelines Safety Requirements, Aircraft/Systems Guidelines Requirements, and DO-254 Hardware Requirements:

Figure: Optimal Avionics Software Guidelines Requirements Decomposition per AFuzion

The top 10 Avionics Software Guidelines Best Practices will typically yield a 15-20% cost reduction; the remaining practices are covered in the full AFuzion Avionics Software Guidelines Best Practices paper.
