DO-178C – Common Gaps & How To Close Them

Download Full 10+ Page DO-178C Gap Whitepaper

DO-178C is increasingly required worldwide for almost anything that flies: commercial and military aircraft, missiles, drones, even some balloons. Developing avionics for these aircraft is increasingly complex and expensive, particularly when complying with DO-178C.

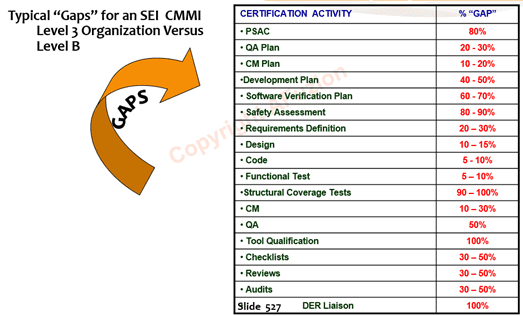

The following AFuzion figure (from AFuzion’s DO-178C Training) summarizes the common gaps between what a DO-178C DAL B project requires and what a CMMI Level 3 company typically delivers on its first such project:

Figure: Common DO-178C Gaps per AFuzion Training

Here is a reverse countdown of the common DO-178C gaps, but first:

For free membership in the world’s largest Avionics Hardware DO-254 User Group, please click here: https://www.linkedin.com/groups/1830071/

For free membership in the world’s largest Avionics Software DO-178C User Group, please click here: https://www.linkedin.com/groups/4102798/

Gap #19: Inappropriate Tool Qualification.

DO-178 requires that certain tools be qualified before certification credit can be taken for their use. DO-178C defines three tool qualification “Criteria”, which, combined with the software level, determine one of five Tool Qualification Levels (TQL-1 through TQL-5). Criteria 1 corresponds to the old DO-178B “development” tools (those whose output is part of the airborne software and could therefore insert an error), and Criteria 3 corresponds to the old DO-178B “verification” tools (those that could fail to detect an error). Criteria 2, new in DO-178C, covers verification tools whose output is also used to justify reducing or eliminating other verification or development processes. Qualification is required when a tool eliminates, reduces, or automates one of the software lifecycle processes required by DO-178 and its output is not otherwise verified. Often, tool qualification is performed on compilers, source control, or other tools when in fact such qualification is not required. Other times, qualification is skipped for tools which do require it, and the entire certification is jeopardized. For more information on DO-178C tool qualification, see the dedicated AFuzion whitepaper. For a free DO-326A cybersecurity gap tutorial and training video, view here:
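DO-178C Table 12-1 combines the three tool qualification criteria with the software level to yield a TQL. The lookup below is a minimal planning sketch, assuming the tool has already been classified against the criteria; the function name `required_tql` and the flags `takes_process_credit` and `output_verified` are hypothetical, and the real determination belongs in the PSAC and Tool Qualification Plan, agreed with the authority.

```python
from enum import Enum
from typing import Optional


class Criteria(Enum):
    # Criteria 1: output is part of the airborne software and could insert an error.
    CRITERIA_1 = 1
    # Criteria 2: automates verification, and its output justifies reducing or
    # eliminating other verification or development processes.
    CRITERIA_2 = 2
    # Criteria 3: within its intended use, could fail to detect an error.
    CRITERIA_3 = 3


# TQL number by criteria and software level (DO-178C Table 12-1).
_TQL = {
    Criteria.CRITERIA_1: {"A": 1, "B": 2, "C": 3, "D": 4},
    Criteria.CRITERIA_2: {"A": 4, "B": 4, "C": 5, "D": 5},
    Criteria.CRITERIA_3: {"A": 5, "B": 5, "C": 5, "D": 5},
}


def required_tql(criteria: Criteria, software_level: str,
                 takes_process_credit: bool, output_verified: bool) -> Optional[str]:
    """Return e.g. 'TQL-2', or None when qualification is not needed (hypothetical helper)."""
    level = software_level.strip().upper()
    if level not in ("A", "B", "C", "D", "E"):
        raise ValueError(f"unknown software level: {software_level!r}")
    # No process credit taken, output verified anyway, or Level E: no qualification.
    if not takes_process_credit or output_verified or level == "E":
        return None
    return f"TQL-{_TQL[criteria][level]}"
```

For example, a Level A code generator whose output is not independently verified maps to `TQL-1`, while a compiler whose object code is fully verified by testing maps to `None`, which is the overqualification trap described above.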

Gap #18: Insufficient PSAC.

The DO-178C PSAC (Plan for Software Aspects of Certification) is the cornerstone document for every avionics software certification. Each system normally requires its own PSAC, and there may be additional PSACs for various software components within the system. The PSAC is one of the few DO-178 required software documents (there are over two dozen necessary for each project) that must be submitted to and approved by the certification authorities (e.g., the FAA or EASA for civil aircraft, military authorities for defense-related aircraft and UAVs). Remember, the DO-178C PSAC forms the agreement with the authority regarding certification strategy. As such, the PSAC should clearly state all certification rationale, tools and tool qualification strategies, COTS software, high-level system architecture, responsibilities, and schedule aspects. The producer should obtain PSAC approval before proceeding with substantial additional software development, or else accept substantial certification risk. The PSAC should also clearly state how ARP4761A and ARP4754A will be applied throughout the project for safety and systems considerations, respectively. A good PSAC template, review criteria, and a technical writer on staff are some error-proofing suggestions; see here for an AFuzion DO-178C PSAC Template: https://afuzion.com/plans-checklists/#do-178c-plan-templates

Gap #17: Missing Robustness Test Cases.

DO-178 requires “robustness” testing, whereby both requirements (DALs A–D) and code (DALs A–C) need to be tested against potential abnormal scenarios. What is “abnormal”? Boundary values, error values, all state transitions including potential invalid transitions, timing, performance, etc. For DO-178C DALs A–C, this requires considering the code as well: test cases must trace to requirements, but they must also consider code constructs. All of these are areas where DO-178C test cases are commonly weak or missing on many programs.
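Two of the abnormal categories above, boundary values and invalid state transitions, can be enumerated mechanically as a starting point for robustness test cases. This is a minimal sketch, assuming integer-valued ranges and a requirement-defined set of allowed transitions; the mode names and transition set are hypothetical, and self-transitions are treated as invalid here only because this example's requirements do not allow them.

```python
from itertools import product


def boundary_values(low, high, step=1):
    """Return (normal, abnormal) values for an integer range [low, high].
    Normal: the limits and their inner neighbours. Abnormal: just outside."""
    normal = sorted({low, low + step, high - step, high})
    abnormal = [low - step, high + step]
    return normal, abnormal


def invalid_transitions(states, allowed):
    """Every (from, to) pair the requirements do not allow; each is a
    candidate robustness test the software must reject or handle safely."""
    return [(src, dst) for src, dst in product(states, repeat=2)
            if (src, dst) not in allowed]


# Hypothetical mode logic, for illustration only.
MODES = ["OFF", "STANDBY", "ACTIVE"]
ALLOWED = {("OFF", "STANDBY"), ("STANDBY", "ACTIVE"),
           ("ACTIVE", "STANDBY"), ("STANDBY", "OFF")}
```

Here `boundary_values(0, 100)` yields `([0, 1, 99, 100], [-1, 101])`, and `invalid_transitions(MODES, ALLOWED)` yields five pairs such as `("OFF", "ACTIVE")`. Each generated case still needs expected results derived from the requirements.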

Gap #16: Applying Formal Methods without Formal Notation.

DO-333 is a relatively new guideline which governs how formal methods may be applied within software development on DO-178C (and DO-278A) projects to augment or replace some forms of verification. (Remember, verification equals reviews, plus tests, plus analysis.) However, it is insufficient to merely claim that formal methods were applied to ordinary software requirements, design, or source code. Formal methods are called “formal” because they must be based upon a formal “notation”, i.e., a mathematically defined language with precise syntax and semantics which ensures mathematical closure. Thus, a formal notation can ensure that all inputs and outputs of a given algorithm are contained and addressed. On most DO-178C projects employing formal methods, they are applied to selected algorithms, generally not to the entire program.
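To illustrate what a formal notation adds over prose requirements, the fragment below uses Lean 4 (chosen only for this sketch; DO-333 does not prescribe a particular notation) on a hypothetical three-mode state machine. The checker rejects the definition unless every input mode is handled, and the theorem is proved for every possible input rather than for sampled test values.

```lean
-- Hypothetical mode logic expressed in a formal notation (Lean 4).
inductive Mode where
  | off
  | standby
  | active
  deriving DecidableEq

-- Rejected by the checker unless every input mode is covered.
def nextMode : Mode → Mode
  | .off     => .standby
  | .standby => .active
  | .active  => .standby

-- Property established for all inputs: the logic never commands `off`.
theorem nextMode_ne_off (m : Mode) : nextMode m ≠ Mode.off := by
  cases m <;> decide
```

Real DO-333 analyses target larger algorithms and must also justify the soundness of the analysis tool, but the principle is the same: closure over the whole input domain, not coverage of chosen test points.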

Gap #15: Missing “No Unwarranted Changes” CM Step.

DO-178C (and DO-254 / DO-278A) requires good software configuration management (CM). While not required, virtually all successful DO-178C / DO-254 avionics projects utilize a commercial CM tool. These tools range from overly simple and minimally useful to overly complex, burdensome, and expensive. However, all such CM tools have one attribute in common: none checks or enforces DO-178’s requirement that the software defect correction process ensures that no unwarranted changes are made during the correction of a defect. What are unwarranted changes? Any change not specifically associated with, or cited in, the corresponding problem report. How are unwarranted changes prevented? Manually, via the reviewer (preferably independent; independence is required for the higher criticality levels of DO-178C). The reviewer should compare “before vs. after” (with the assistance of an electronic “diff” of the digital items: requirements, source code, tests, etc.) and ensure that the only changes made directly pertain to the problem or anomaly described in the corresponding problem report. For DO-178C RTOS training details, click here: https://afuzion.com/private-training/safety-critical-real-time-operating-systems-rtos-training/
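The mechanical part of that review can be supported with a small script, while the judgment stays with the reviewer. This is a minimal sketch, assuming each baseline is available as a mapping from item name to text and that the problem report cites items by the same names; the helper names and sample baselines are hypothetical. It uses Python's standard `difflib.unified_diff`.

```python
import difflib


def changed_items(before, after):
    """Names of items whose content was modified, added, or removed."""
    names = set(before) | set(after)
    return sorted(n for n in names if before.get(n) != after.get(n))


def uncited_changes(before, after, cited_in_report):
    """Changed items the problem report does not cite: each one is a potential
    unwarranted change that must be justified or reverted."""
    return [n for n in changed_items(before, after) if n not in cited_in_report]


def review_diff(before, after, name):
    """Unified 'before vs. after' diff of one item, for the reviewer to inspect
    line by line; the script cannot judge whether a changed line is warranted."""
    return "".join(difflib.unified_diff(
        before.get(name, "").splitlines(keepends=True),
        after.get(name, "").splitlines(keepends=True),
        fromfile=f"before/{name}", tofile=f"after/{name}"))


# Hypothetical baselines: item name -> content.
BEFORE = {"alt_filter.c": "x = a;\ny = b;\n", "nav.c": "z = 1;\n"}
AFTER = {"alt_filter.c": "x = a;\ny = c;\n", "nav.c": "z = 2;\n"}
```

With a problem report citing only `alt_filter.c`, `uncited_changes(BEFORE, AFTER, {"alt_filter.c"})` flags `nav.c`. Changes inside a cited item still require the independent reviewer to confirm each line relates to the reported problem.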

Gap #14: Not Detecting Coding Errors Early.

DO-178 is designed to minimize, mitigate, and detect coding errors. However, DO-178 is budget-agnostic: avionics producers are free to spend as much money as they desire in their pursuit of certification. The marketplace, however, is different. Studies have shown that the cost of fixing a bug in the formal test phase is 5 to 10 times greater than the cost of detecting and correcting it during the coding phase. DO-178C coding standards, peer reviews with detailed checklists, and unit testing are all important steps. However, an often-overlooked step is static code analysis via a commercial code-checking tool. In virtually every case imaginable, the cost of applying such a static code analysis tool is less than the downstream cost of not applying it. Advanced static code analysis tools can provide continuous automated code reviews with each check-in to verify that the change did not introduce a new problem (e.g., recursion, or a violation of cyclomatic complexity limits or coding standards). For a DO-178C coding standard template, click here: https://afuzion.com/plans-checklists/#do-178c-plan-templates
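The check-in gate described above can be sketched in a few lines. Commercial analyzers parse C, C++, or Ada; this sketch uses Python's standard `ast` module on Python source purely to show the logic. The complexity count is an approximation of McCabe's metric (1 plus decision points), only direct recursion is detected, and the limit of 10 and the function names are assumptions.

```python
import ast

# Node types counted as decision points in this approximation.
_DECISIONS = (ast.If, ast.For, ast.AsyncFor, ast.While, ast.IfExp, ast.ExceptHandler)


def cyclomatic_complexity(func):
    """Approximate McCabe complexity: 1 + decision points in the function."""
    score = 1
    for node in ast.walk(func):
        if isinstance(node, _DECISIONS):
            score += 1
        elif isinstance(node, ast.BoolOp):
            score += len(node.values) - 1  # each extra 'and'/'or' operand adds a branch
    return score


def is_directly_recursive(func):
    """True if the function calls itself by name (indirect recursion is not detected)."""
    return any(isinstance(n, ast.Call) and isinstance(n.func, ast.Name)
               and n.func.id == func.name for n in ast.walk(func))


def check_in_gate(source, max_complexity=10):
    """Return findings for every function in the checked-in source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            cc = cyclomatic_complexity(node)
            if cc > max_complexity:
                findings.append(f"{node.name}: complexity {cc} exceeds limit {max_complexity}")
            if is_directly_recursive(node):
                findings.append(f"{node.name}: direct recursion")
    return findings


# Hypothetical checked-in source used only to exercise the gate.
SAMPLE = (
    "def fact(n):\n"
    "    if n <= 1:\n"
    "        return 1\n"
    "    return n * fact(n - 1)\n"
)
```

Run from a CM pre-commit hook, a non-empty findings list would block or flag the check-in; `check_in_gate(SAMPLE)` reports `fact: direct recursion`.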

Gap #13: Not Using a Commercial Certifiable RTOS.

In the past decade, complex avionics have increasingly made use of a real-time operating system (RTOS), particularly when DO-178C certification is required. Older or trivial projects made do with a simple kernel or executive/poll-loop which controls program execution. However, the strong trend toward rapid development, extensible and reusable designs, third-party libraries and drivers, communication protocols, partitioning, safety, and certifiability all drive the need for a commercial RTOS. There are over fifty providers of commercial RTOSs, and all have their strong points and suitable applications. Consider performing Rate Monotonic Analysis (RMA) by the test readiness phase to further error-proof your RTOS-based design by confirming that its task set is schedulable. For DO-178C, there are 15-20 primary criteria applicable to RTOS selection (see AFuzion’s DO-178 training materials for complete information). RTOS certification can require years and cost millions of dollars, hence the need for pre-certified (“certifiable”) RTOSs, of which there are approximately fifteen available (for a list, contact AFuzion).
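The two classic RMA checks can be sketched as follows: the Liu and Layland utilization bound, U = Σ Cᵢ/Tᵢ ≤ n(2^(1/n) − 1), which is sufficient but not necessary, and exact response-time analysis. This is a minimal sketch, assuming independent periodic tasks, fixed-priority preemptive scheduling, deadlines equal to periods, negligible context-switch and blocking time, and integer time units; the task set is hypothetical.

```python
def utilization_bound_ok(tasks):
    """Liu & Layland sufficient test for rate-monotonic scheduling.
    tasks: list of (C, T) = (worst-case execution time, period)."""
    if not tasks:
        return True
    n = len(tasks)
    utilization = sum(c / t for c, t in tasks)
    return utilization <= n * (2 ** (1 / n) - 1)


def response_times(tasks):
    """Exact response-time analysis with rate-monotonic priorities
    (shorter period = higher priority) and deadline = period.
    Returns worst-case response times in period order; None marks a deadline miss."""
    ordered = sorted(tasks, key=lambda task: task[1])
    results = []
    for i, (c_i, t_i) in enumerate(ordered):
        r = c_i
        while True:
            # Preemption by each higher-priority task released within r (integer ceiling).
            interference = sum(((r + t_j - 1) // t_j) * c_j for c_j, t_j in ordered[:i])
            r_next = c_i + interference
            if r_next > t_i:
                results.append(None)  # deadline miss: not schedulable
                break
            if r_next == r:
                results.append(r)  # fixed point: worst-case response time
                break
            r = r_next
    return results


# Hypothetical task set: (C, T) in milliseconds.
TASKS = [(1, 4), (2, 6), (3, 12)]
```

For `TASKS`, utilization is about 0.833, above the three-task bound of about 0.780, so `utilization_bound_ok` returns `False`; yet `response_times(TASKS)` returns `[1, 3, 10]`, showing every deadline is met. That is why the exact analysis is worth running before rejecting a design.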

Gap #12: Lack of Automated Testing.

Testing is a key aspect of DO-178C. It is common for DO-178C (and DO-254) projects to spend more hours on testing than on coding. Whereas DO-178C software coding is (or should be) given the full benefit of modern software tools, testing is often an afterthought, with very little consideration given to tools and productivity. Quality does not inherently improve via testing on a DO-178C avionics project; instead, testing measures quality, the process can then be improved, and the improved process improves quality. Testing will be performed dozens, and potentially hundreds, of times on the same items over the many-year life of the product, covering many evolutions. The best DO-178C regression-test strategy is to retest as much of the software as possible, automatically. Thus, testing should be automated for all but the simplest products. The test team should be involved in all requirements reviews, design reviews, and code reviews to ensure these artifacts are testable and to prepare automated test cases as early as possible.
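A table-driven harness is one common way to make requirements-based tests re-runnable unattended on every baseline. The sketch below assumes each case records its test ID, the requirement it verifies, its inputs, and its expected output; the unit `clamp` and all IDs are hypothetical stand-ins, and a production harness would also run on target hardware and archive results as test evidence.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence


@dataclass(frozen=True)
class RegressionCase:
    test_id: str
    requirement_id: str  # trace link back to the requirement being verified
    inputs: tuple
    expected: Any


def run_regression(unit: Callable, cases: Sequence[RegressionCase]):
    """Execute every case unattended; return (passed_count, failed_ids)."""
    failed = [case.test_id for case in cases if unit(*case.inputs) != case.expected]
    return len(cases) - len(failed), failed


# Hypothetical unit under test: limit a commanded value to [low, high].
def clamp(value, low, high):
    return max(low, min(value, high))


# Hypothetical requirement and test IDs, for illustration only.
CASES = [
    RegressionCase("TC-001", "SRS-CLAMP-01", (50, 0, 100), 50),    # normal range
    RegressionCase("TC-002", "SRS-CLAMP-02", (-5, 0, 100), 0),     # robustness: below range
    RegressionCase("TC-003", "SRS-CLAMP-02", (105, 0, 100), 100),  # robustness: above range
]
```

Because the cases are data, the same table can be re-executed after every change, and the `requirement_id` field feeds the traceability matrix directly.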

Gap #11: Lack of Path Coverage Capture During Functional Tests.

DO-178C structural coverage (commonly, if loosely, called “path coverage”) is required to an increasing degree for Level C, B, and A software: statement coverage for Level C, plus decision coverage for Level B, plus modified condition/decision coverage (MC/DC) for Level A. (Path coverage is not required for Level D, and no DO-178 process steps are required for Level E.) However, path coverage is usually sought and obtained after the other forms of required DO-178 testing are complete. This is highly inefficient and unnecessary. Instead, the software environment and test suite should be planned in advance so that path coverage is obtained during requirements-based black-box testing (i.e., “functional testing”). Remember, when you instrument the software to obtain path coverage data within DO-178C, you need to execute the tests twice: once with instrumentation and once without, to correlate test results and affirm that the instrumentation did not mask test failures.
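The idea of capturing coverage during functional tests, then correlating instrumented and uninstrumented runs, can be sketched as follows. The `DecisionProbes` class is a hypothetical stand-in for a coverage tool's instrumentation and measures decision coverage only (both outcomes of each decision); real Level A work also needs MC/DC, and the unit `overspeed_status` and test IDs are invented for illustration.

```python
from collections import defaultdict


class DecisionProbes:
    """Stand-in for coverage instrumentation: records every outcome each
    decision takes while the requirements-based tests run."""

    def __init__(self):
        self.outcomes = defaultdict(set)

    def probe(self, decision_id, value):
        self.outcomes[decision_id].add(bool(value))
        return value  # the probe must not alter program behavior

    def uncovered(self, decision_ids):
        """Decisions not yet observed both true and false."""
        return sorted(d for d in decision_ids if self.outcomes.get(d) != {True, False})


def overspeed_status(speed, limit, probes=None):
    """Hypothetical unit under test with one decision, D1."""
    check = probes.probe if probes is not None else (lambda _decision_id, value: value)
    if check("D1", speed > limit):
        return "WARN"
    return "OK"


def run_suite(probes=None):
    """Requirements-based (functional) tests; returns test_id -> pass/fail."""
    cases = {"TC-1": ((250, 240), "WARN"), "TC-2": ((200, 240), "OK")}
    return {tid: overspeed_status(*args, probes=probes) == want
            for tid, (args, want) in cases.items()}


def correlate(instrumented, uninstrumented):
    """Tests whose verdict differs between the two builds, or ran in only one."""
    ids = set(instrumented) | set(uninstrumented)
    return sorted(t for t in ids if instrumented.get(t) != uninstrumented.get(t))
```

Running `run_suite(probes)` once with a `DecisionProbes` instance and once with no probes gives both the coverage data (`probes.uncovered(["D1"])` is empty) and the two verdict sets; an empty `correlate(...)` result is the evidence that instrumentation did not mask a failure.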

Gap #10: Excessive Code Iterations.

It is normal for a new DO-178C or DO-254 (even DO-278A) project to produce a code baseline through multiple iterations. However, most projects greatly exceed any reasonable number of iterations because software development is viewed as an iterative process instead of an engineering process. Software creation does not necessarily imply software engineering; however, it should. Excessive code iterations result from one or more of the following deficiencies: insufficient requirement detail, insufficient coding standards, or insufficient checklists. DO-178 coding standards and checklists are available from a variety of sources; perform a Google search on “DO-178” for more information, or see AFuzion’s here: https://afuzion.com/plans-checklists/#do-178c-plan-templates. In DO-178C, QA should monitor and report on excessive code versions, since they may indicate poor design definition, weak requirements, or overly complex requirements.
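A QA metric for excessive iterations can be as simple as counting committed revisions per file and flagging outliers. This sketch assumes the change log is obtained as one file name per revision, for example from `git log --name-only --pretty=format:` (file names per commit separated by blank lines); the threshold is a hypothetical project-defined value, and the flagged files are a prompt for investigation, not a verdict.

```python
from collections import Counter


def parse_name_only_log(text):
    """Parse `git log --name-only --pretty=format:` output into one
    file name per revision, skipping the blank separator lines."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def excessive_iterations(change_log, threshold):
    """Return (file, revisions) for files revised more than `threshold`
    times, most-revised first, for QA to investigate."""
    counts = Counter(change_log)
    return [(name, n) for name, n in counts.most_common() if n > threshold]
```

Files that keep reappearing in the report are good candidates for checking whether their requirements are detailed enough and their coding checklists are actually being applied.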

Gap #9: Inadequate and Non-Automated Traceability.

Traceability, both top-to-bottom and bottom-to-top, is required for DO-178 certification. Top-to-bottom traceability ensures that system-level requirements, software requirements, software code, and software tests are complete. Bottom-to-top traceability ensures that the only functionality present is that specified by requirements. Traceability should be audited along the way to affirm appropriate reviews were performed, and it must be complete and audited as such during the conformity review just prior to certification. However, tremendous project management and productivity efficiencies may be gained by achieving accurate traceability early in the project and maintaining it continuously thereafter. Also, avionics development increasingly uses models via model-based development (MBD). When models are used, traceability must be provided “through” the model: it must show the requirements upon which the model was based, the model elements which embody those requirements, and the code and tests which then follow from those model elements. Traceability will be required through the life of the product, often several decades. Except for the smallest projects (under 2-3K LOC), traceability should be semi-automated via a custom or commercial tool such as IBM DOORS, Polarion, or Jama. Remember, traceability is never fully automated: it must still be reviewed per DO-178C and DO-254.
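The core completeness checks a traceability tool automates can be sketched for one level of the hierarchy. This sketch assumes trace links are exported as (parent, child) ID pairs; the IDs are hypothetical, and the same function would be applied to each level in turn (system to software requirements, requirements to code, requirements to tests). It finds missing or orphaned links but cannot judge whether a link is correct, which is why the review remains mandatory.

```python
def trace_gaps(parents, children, links):
    """Check one traceability level in both directions.
    links: set of (parent_id, child_id) pairs.
    Returns (untraced_parents, orphan_children):
      untraced_parents -> top-to-bottom gaps (incomplete implementation or verification)
      orphan_children  -> bottom-to-top gaps (possible unintended functionality)"""
    parents, children = set(parents), set(children)
    valid = {(p, c) for p, c in links if p in parents and c in children}
    traced_parents = {p for p, _ in valid}
    traced_children = {c for _, c in valid}
    return sorted(parents - traced_parents), sorted(children - traced_children)


# Hypothetical IDs for one level: system requirements -> software requirements.
SYSTEM_REQS = ["SYS-1", "SYS-2"]
SOFTWARE_REQS = ["HLR-1", "HLR-2", "HLR-3"]
LINKS = {("SYS-1", "HLR-1"), ("SYS-1", "HLR-2")}
```

Here `trace_gaps(SYSTEM_REQS, SOFTWARE_REQS, LINKS)` returns `(["SYS-2"], ["HLR-3"])`: SYS-2 is not yet implemented by any software requirement, and HLR-3 traces to nothing above it.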

Gap #8: Avoiding Modeling Due to Lack of Qualified Modeling Tools.

Modeling is increasingly used in complex systems development, including avionics and aerospace software development per DO-178C. The advantages are so numerous that applying modeling is the subject of another whitepaper by this author. However, many DO-178C and DO-278A developers avoid modeling because they do not have, or do not want to use, a modeling tool which is qualified per DO-178C and DO-331. Admittedly, many of the major modeling tools are not qualified, because their manufacturers do not want to continuously requalify them or believe there is limited return on investment (ROI). However, modeling can (and often should) be used even if the preferred modeling tool is not qualified. Remember, modeling tools are tools, and tools only need to be qualified in DO-178C per DO-330/DO-331 if the tool output is not otherwise verified. How do you verify the output of a modeling tool? Simple: review and test the outputs of the modeling tool, namely the model itself. If code is automatically generated from the model (an increasingly common choice within avionics), then review and test that code as if it were handwritten, not model-generated. Voilà: no modeling tool qualification is required for DO-178C compliance in such a case.

To download the remaining top 7 DO-178C gaps, just click the Download button below.

What are the common DO-178C gaps, what are the gaps versus DO-178B, and how can these gaps best be closed? These questions and more are answered within this AFuzion technical whitepaper. AFuzion has performed more than 80 DO-178C gap analyses in 25 countries for 60 avionics development clients worldwide, more than the existing personnel of all other current gap analysis companies combined. For free information on DO-178C gap analysis (or to inquire about engaging AFuzion to perform one for you), see https://afuzion.com/gap-analysis/

Download Full 10+ Page DO-178C Gap Whitepaper

